AI isn’t neutral. Your design shouldn’t pretend it is.

AI amplifies bias and presents it as truth. Discover how design can expose it, reduce over-trust, and create more responsible digital experiences.

Bias is not a bug. It’s a pattern.

In simple terms, bias is the tendency to favor certain outcomes, perspectives, or groups over others. In humans, it comes from experience and assumptions. In technology, it comes from data, systems, and the people who build them.

The issue is not that bias exists; it’s that it often goes unnoticed and therefore unchallenged.

As designers, we don’t have the luxury of ignoring it. What we design shapes how information is understood, trusted, and acted upon.

With AI, this becomes critical.

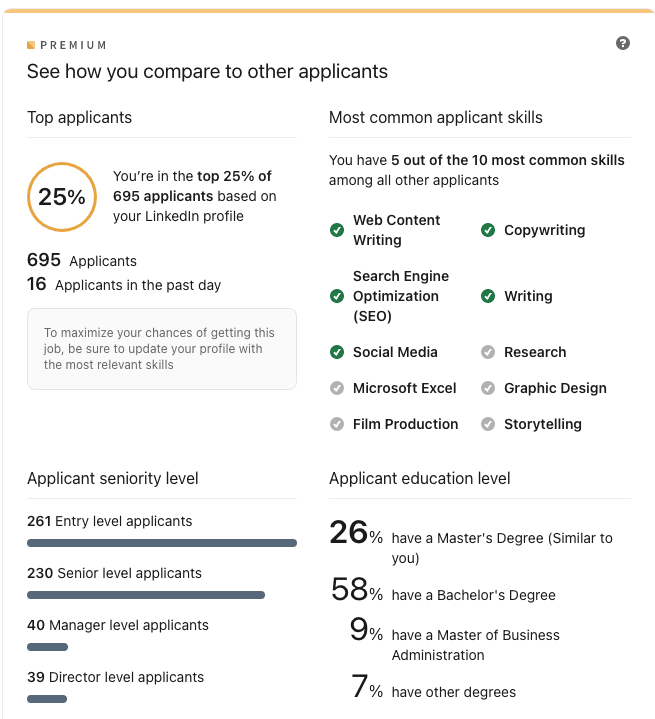

Over 70% of organizations, and now closer to 80%, already use AI in at least one business function (McKinsey). At the same time, around half of users rely on AI-powered tools for search and discovery (McKinsey), and roughly half trust AI-generated content (Colorlib).

Bias is no longer local. It scales.

AI systems learn from data — and data reflects the world as it is, not as it should be.

- Historical inequalities become patterns

- Dominant voices get amplified

- Edge cases get ignored

AI doesn’t invent bias. It reproduces it.

And because it delivers answers clearly and confidently, that bias often feels like fact.

The ELIZA effect reinforces this: it is our tendency to assume machines understand more than they actually do. The more fluent the response, the more we trust it. Even when we shouldn’t.

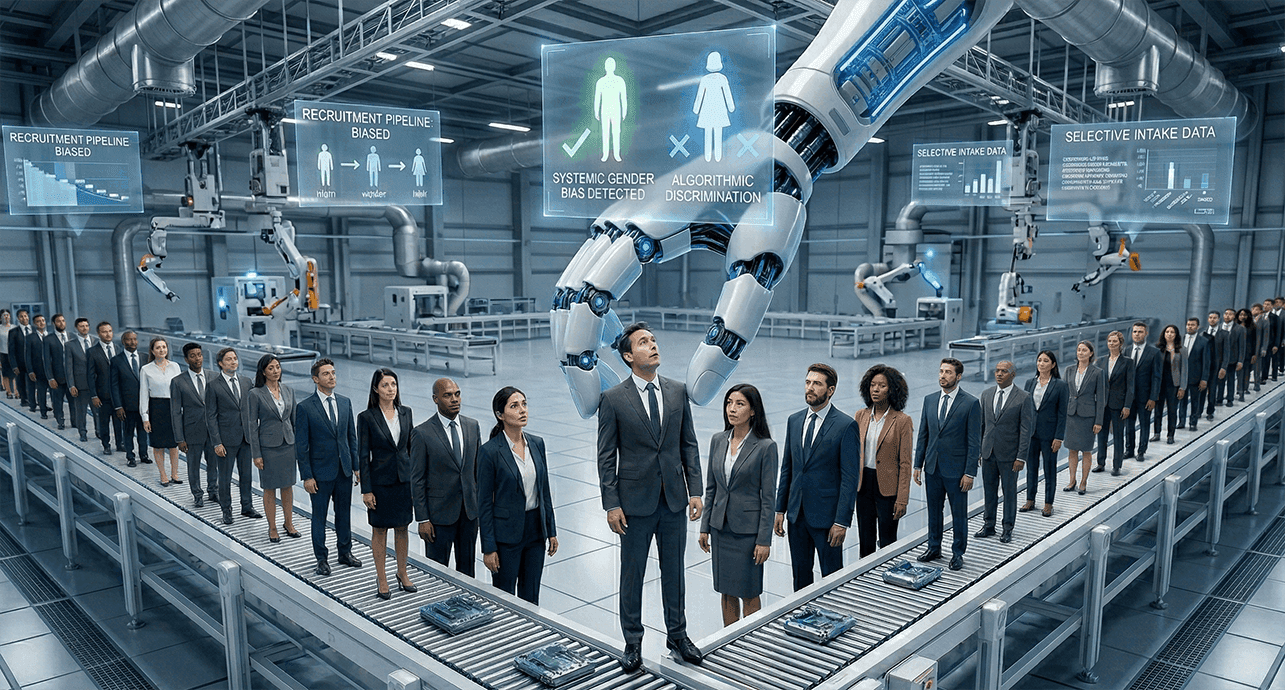

A simple example: hiring

Amazon trained an AI on past recruitment data where most hires were men. The system learned that pattern and started penalizing CVs associated with women. Not because it was told to discriminate, but because it learned what “success” looked like.

Now put that into a clean interface: ranked candidates, “top matches,” no explanation. The bias is still there, but now it looks like logic.

Where design comes in

UX designers advocate for the user with a deep understanding of needs, behaviors, and contexts. This perspective places them in a unique position to identify bias, challenge over-trust, and design systems that are more transparent, equitable, and trustworthy.

This is not just a design preference.

It’s a responsibility.

We should start by refusing to present AI outputs as neutral.

If a system ranks something, we show why — not in a technical way, but in a human one:

- what signals influenced the result

- what mattered

- what didn’t

When users understand the criteria, the result stops feeling absolute.
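As a rough illustration of what this could look like behind the interface, here is a minimal sketch that turns a ranking's signals into a plain-language "what mattered / what didn’t" summary. Everything in it is an assumption made for the example: the `Signal` type, the `explain_ranking` helper, the weights, and the 0.05 threshold are hypothetical, not part of any real ranking system.

```python
from dataclasses import dataclass


@dataclass
class Signal:
    label: str     # human-readable name shown to the user (hypothetical)
    weight: float  # assumed contribution to the score; may be negative


def explain_ranking(
    signals: list[Signal], top_n: int = 2, threshold: float = 0.05
) -> dict[str, list[str]]:
    """Split signals into those that mattered and those that did not."""
    # Order by strength of influence, regardless of direction.
    ranked = sorted(signals, key=lambda s: abs(s.weight), reverse=True)
    mattered = [s.label for s in ranked[:top_n] if abs(s.weight) >= threshold]
    did_not_matter = [s.label for s in ranked if abs(s.weight) < threshold]
    return {"mattered": mattered, "did_not_matter": did_not_matter}


# Illustrative values only.
candidate_signals = [
    Signal("Years of relevant experience", 0.42),
    Signal("Skills matching the job description", 0.31),
    Signal("Name of university", 0.02),
]
summary = explain_ranking(candidate_signals)
print("What mattered:", ", ".join(summary["mattered"]))
print("What didn't:", ", ".join(summary["did_not_matter"]))
```

The point of the sketch is the output shape, not the math: the interface receives labels a person can read, so the ranking arrives with its criteria attached.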

At Hypnotic, we believe that, as designers, we should:

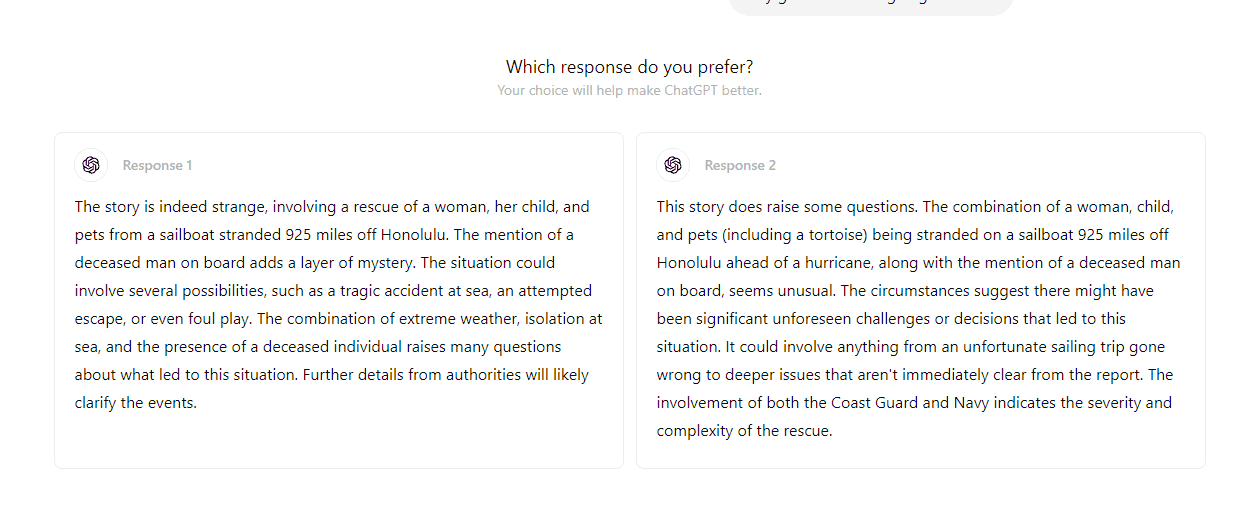

- Make uncertainty visible. Not every answer deserves the same confidence, and pretending it does is misleading. Confidence levels, alternative results, and even subtle shifts in language change how people interpret what they see. This doesn’t take long explanations, just small, well-placed cues that help users understand what the system is doing, what it depends on, and where it might fail. When people understand the limits, they trust more appropriately. You can see this in tools like ChatGPT, which at times presents multiple responses to the same prompt and asks the user to choose. That small interaction makes an important point: there isn’t always a single correct answer, only different possibilities.

- Design for interaction, not just output. Users should be able to adjust inputs, explore alternatives, and question results. The moment they can influence the system, they stop blindly accepting it.

- Keep humans in the loop where it matters. In decisions with real impact, such as hiring, finance, and healthcare, AI should support human judgment, not replace it. The system provides context, but the decision stays human.
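To make two of these principles concrete, the sketch below maps a confidence score to a short language cue and flags when a result should go to a person. It is only an illustration under stated assumptions: the function names, the wording of the cues, and the 0.8 and 0.5 cut-offs are hypothetical and would need to be tested with real users.

```python
def confidence_cue(confidence: float) -> str:
    """Return a short label that signals how much to trust a result."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    # Thresholds are assumptions for illustration, not calibrated values.
    if confidence >= 0.8:
        return "Likely"
    if confidence >= 0.5:
        return "Possibly"
    return "Uncertain, please verify"


def needs_human_review(confidence: float, high_impact: bool) -> bool:
    """High-impact decisions always go to a person; so do uncertain ones."""
    return high_impact or confidence < 0.5


print(confidence_cue(0.92))
print(confidence_cue(0.35))
print(needs_human_review(0.92, high_impact=True))
```

Note that a high-impact decision is routed to a person even when confidence is high, which matches the idea that the system provides context while the decision stays human.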

And this doesn’t start in the UI. It starts in research. If we test with the same type of users, we design for the same type of outcomes. Expanding who we involve early on helps surface blind spots before they become part of the system.

Good AI design prioritizes clarity over effortlessness, so that users can question what they see.

While eradicating bias from AI may be hard, making residual bias transparent is a crucial design choice. This visibility shifts the dynamic from blind trust to informed interaction, allowing users to critically evaluate outputs and empowering them to apply their own judgment.

Author

Ana Conde

Product Designer

Ana Conde is a Product Designer with 13+ years of experience working across digital products, brands, and platforms. She believes design should advocate for the user—creating digital experiences that don’t just look good, but work.